Lambda Layers & Runtime API: More Modular, Flexible Functions

Lambda layers and the runtime API are two new features of AWS Lambda that open up fun possibilities for customizing the Lambda runtime and reduce code duplication across Lambda functions. Layers let you package up a set of files and include them in multiple functions. The runtime API provides an HTTP interface to the Lambda service's function lifecycle events, which lets you be much more flexible about what you run in your Lambda.

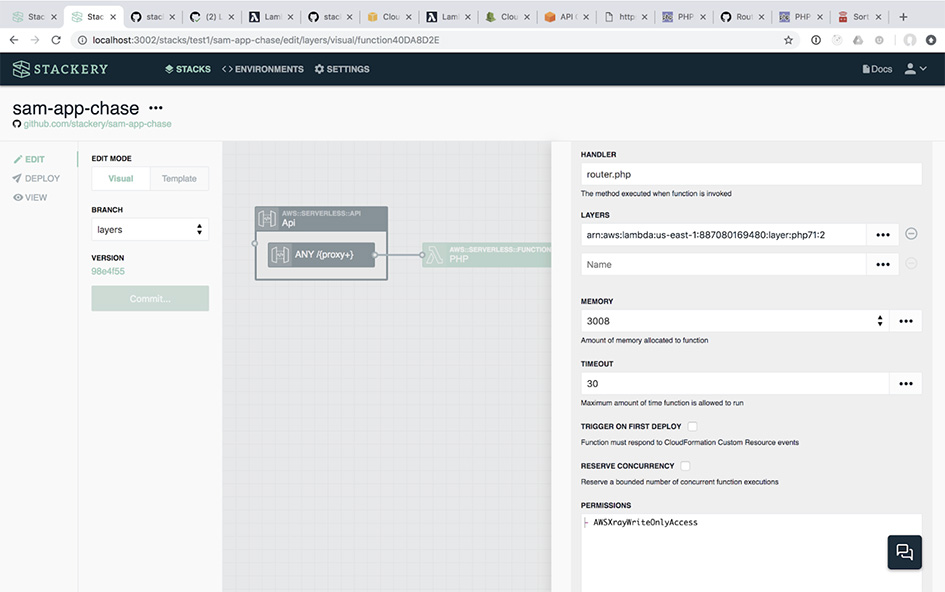

Layers are aimed at a common pain point teams hit as the number of Lambdas in their application grows. Today, we see customers performing gymnastics in order to compile binaries or package reusable libraries inside functions. One downside of this approach is that it is difficult to ensure all functions have the latest version of a dependency, leading to inconsistencies across environments or over-complicated, error-prone packaging processes. For example, at Stackery we compile git and package it into some of our functions to enable integration with GitHub, GitLab, and CodeCommit. Prior to layers, upgrading that dependency meant that each developer responsible for a function had to repackage those files in each related function. With layers, it's much easier to standardize those technical and human dependencies, and the combination of layers and the runtime API enables a cleaner separation of concerns between business logic function code and cross-cutting runtime concerns. In fact, in Stackery, adding a layer to a function is just a dropdown box. That feels like a little thing, but it opens up several interesting use cases:

1. Bring Your Own Runtime

AWS Lambda provides 6 different language runtimes (Python, Node, Java, C#, Go, and Ruby). Along with layers comes the ability to customize specific files that are hooked into the Lambda runtime. This means you can (gasp!) run any language you want in AWS Lambda. We've been aware for some time now that there is no serverless "lock in", but with these new capabilities you are able to fully customize the Lambda runtime.

To implement your own runtime you create a file called bootstrap in either a layer or directly in your function. It must have executable permissions (chmod +x).

Your bootstrap custom runtime implementation must perform these steps:

- Load the function handler using the Lambda handler configuration. This is passed to bootstrap through the _HANDLER environment variable.

- Request the next event over HTTP:

  curl "http://${AWS_LAMBDA_RUNTIME_API}/2018-06-01/runtime/invocation/next"

- Invoke the function handler and capture the result.

- Send the response to the Lambda service over HTTP:

  curl -X POST "http://${AWS_LAMBDA_RUNTIME_API}/2018-06-01/runtime/invocation/$INVOCATION_ID/response" -d "$RESPONSE"
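The four steps above can be tied together in a small shell bootstrap. What follows is a minimal sketch rather than a production runtime. It assumes that _HANDLER has the form file.function, that a hypothetical script named file.sh under $LAMBDA_TASK_ROOT defines a shell function with that name, and that the invocation ID is read from the Lambda-Runtime-Aws-Request-Id header returned by the "next" request. The helper names request_id_from_headers and run_loop are illustrative only.

```shell
#!/bin/sh
# Sketch of a custom runtime "bootstrap" file (assumptions are noted inline).
set -eu

API="http://${AWS_LAMBDA_RUNTIME_API:-}/2018-06-01/runtime"

# Pull the invocation ID out of a file of saved HTTP response headers.
request_id_from_headers() {
  grep -i '^Lambda-Runtime-Aws-Request-Id:' "$1" | tr -d '[:space:]' | cut -d: -f2
}

run_loop() {
  # Step 1: load the handler. Assumes _HANDLER looks like "file.function"
  # and that file.sh (hypothetical) defines a shell function of that name.
  . "${LAMBDA_TASK_ROOT}/${_HANDLER%%.*}.sh"
  handler_fn="${_HANDLER#*.}"
  headers="$(mktemp)"

  while true; do
    # Step 2: request the next event; this call blocks until one arrives.
    event="$(curl -sS -L -D "$headers" "${API}/invocation/next")"
    invocation_id="$(request_id_from_headers "$headers")"

    # Step 3: invoke the handler and capture its output.
    response="$("$handler_fn" "$event")"

    # Step 4: send the result back to the Lambda service.
    curl -sS -X POST "${API}/invocation/${invocation_id}/response" -d "$response"
  done
}

# Only enter the loop when running inside Lambda, where this variable is set.
if [ -n "${AWS_LAMBDA_RUNTIME_API:-}" ]; then
  run_loop
fi
```

Error handling is left out to keep the sketch short. A real runtime would also report handler failures to the runtime API's invocation error endpoint instead of exiting the loop.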

It's pretty much guaranteed there will be a bunch of new languages for you to deploy any minute through layers. At Stackery we're debating whether a PHP or Haskell layer would be of greater benefit.

2. Shared Binaries and Libraries

Serverless apps often rely on reusable libraries and commands which the business logic code calls into. For example, our engineering team runs git inside some of our functions, which we package alongside our Node.js function code. Scientific libraries, shell scripts, and compiled binaries are a few other common examples. While it's nice to be able to package any files along with our code, when these dependencies are used across many functions, need to be compiled, or are updated frequently, you can end up with growing build complexity and team distractions.

With layers you can extract these shared dependencies and register that package within the account. In Stackery's function editor you'll see a list of all the layers in your account and can apply them to that function. This simplifies the management and versioning of reusable libraries used by your functions.
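As a rough sketch of what extracting a shared binary into a layer can look like from the command line, the script below stages an executable and publishes it with the AWS CLI. Layer contents are extracted under /opt in the function's environment, so a file placed in bin/ ends up at /opt/bin, which is on the PATH. The function names stage_layer and publish_layer, and the layer name in the usage note, are hypothetical; publishing assumes zip, the AWS CLI, and valid credentials are available.

```shell
#!/bin/sh
# Sketch: package a shared executable as a Lambda layer.
set -eu

# Copy an executable into <layer_dir>/bin so it appears at /opt/bin in Lambda.
stage_layer() {
  src="$1"
  layer_dir="$2"
  mkdir -p "${layer_dir}/bin"
  cp "$src" "${layer_dir}/bin/"
  chmod +x "${layer_dir}/bin/$(basename "$src")"
}

# Zip the staged directory and publish it as a new layer version.
# Requires zip, the AWS CLI, and credentials; not called automatically.
publish_layer() {
  layer_dir="$1"
  layer_name="$2"
  zip_file="$(pwd)/${layer_name}.zip"
  (cd "$layer_dir" && zip -qr "$zip_file" .)
  aws lambda publish-layer-version \
    --layer-name "$layer_name" \
    --zip-file "fileb://${zip_file}"
}
```

For example, running stage_layer ./git layer followed by publish_layer layer git-tools would publish a layer version whose ARN can then be attached to a function with aws lambda update-function-configuration and its --layers option.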

The layers approach has the added benefit that it's easier to keep dependencies in sync across all your functions and to upgrade these dependencies across your microservices. Layers provide a way to reduce duplication in your function code, and shared libraries in layers are only counted once against AWS storage limits regardless of how many functions use the layer. Layers can also be made public, so it's likely we'll see open source communities and companies publish Lambda layers to make it easier for developers to run software in Lambda.

Serverless Cross-Cutting Concerns

By now it should be clear that layers unlock some exciting possibilities. Let's take a step back and note that this is one aspect of a broader set of good operational hygiene practices. Microservices have major benefits over monolithic architecture. The pieces of your system get simpler. They can be developed, deployed, and scaled independently. On the other hand, your system consists of many pieces, making it more challenging to keep the things that need to be consistent in sync. These cross-cutting concerns, such as security, quality, change management, error reporting, observability, configuration management, continuous delivery, and environment management (to name a few), are critical to success, but addressing them often feels at odds with the serverless team's desire to focus on core business value and avoid doing undifferentiated infrastructure work.

Addressing cross-cutting concerns for engineering teams is something I'm passionate about, since throughout my career I've seen the huge impact (both positive and negative) it has on an engineering org's ability to deliver. Stackery accelerates serverless teams by addressing the cross-cutting concerns that are inherent in serverless development. This drives technical consistency, increases engineering focus, and multiplies velocity. This is the reason I'm excited to integrate Lambda layers into Stackery; now improving the consistency of your Lambda runtime environments is as easy as selecting the right layers from a dropdown. It's the same reason we're regularly adding new cross-cutting capabilities, such as Secrets Management, GraphQL API definition, and visual editing of existing serverless projects.

There's a saying in software that if something hurts you should do it more often, and typically this applies to cross-cutting problems. Best practices such as automated testing, continuous integration, and continuous delivery all spring from this line of thought. Solving these "hard" cross-cutting problems is the key to unlocking high-velocity engineering: moving with greater confidence towards your goals.
