Self Healing Serverless Applications - Part 2

This blog post is based on a presentation I gave at Glue Conference 2018. The original slides: Self-Healing Serverless Applications -- GlueCon 2018. View the rest here. Parts: 1, 2, 3

This is part two of a three-part blog series. In the last post we covered some of the common failure scenarios you may face when building serverless applications. In this post we'll introduce the principles behind self-healing application design. In the next post, we'll apply these principles with solutions to real-world scenarios.

Learning to Fail

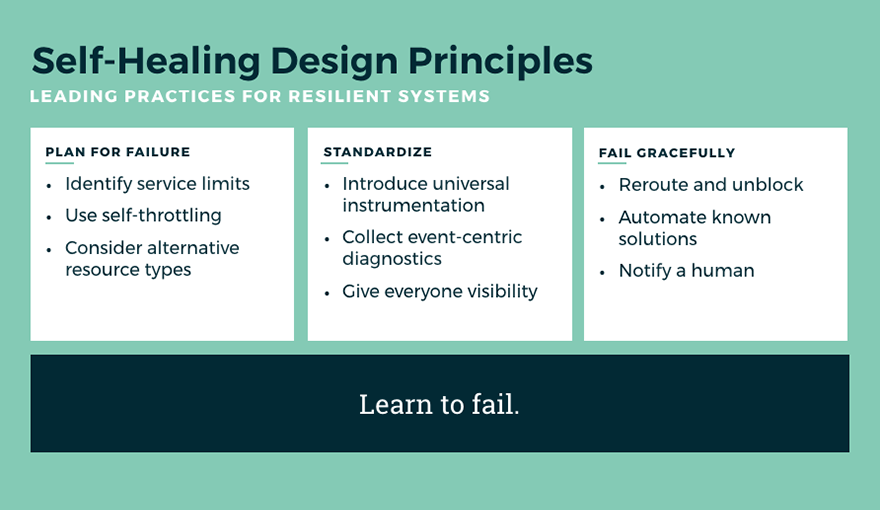

Before we dive into the solution-space, it's worth introducing the defining principles for creating self-healing systems: plan for failure, standardize, and fail gracefully.

Plan for Failure

As an industry, we have a tendency to put a lot of time planning for success and relatively little time planning for failure. Think about it. How long ago did you first hear about Load Testing? Load testing is a great way to prepare for massive success! Ok, now when did you first hear about Chaos Engineering? Chaos Engineering is a great example of planning for failure. Or, at least, a great example of intentionally failing and learning from it. In any event, if you want to build resilient systems, you have to start planning to fail.

Planning for failure is not just a lofty ideal, there are easy, tangible steps that you can begin to take today:

- Identify Service Limits: Remember the Lambda concurrency limits we covered in Part 1? You should know what yours are. You're also going to want to know the limits on the other service tiers, like any databases, API Gateway, and event streams you have in your architecture. Where are you most likely to bottleneck first?

- Use Self-Throttling: By the time you're being throttled by Lambda, you've already ceded control of your application to AWS. You should handle capacity limits in your own application, while you still have options. I'll show you how we do it, when we get to the solutions.

- Consider Alternative Resource Types: I'm about as big a serverless fan as there is, but let's be serious: it's not the only tool in the tool chest. If you're struggling with certain workloads, take a look at alternative AWS services. Fargate can be good for long-running tasks, and I'm always surprised how poorly understood the differences are between Kinesis vs. SQS vs. SNS. Choose wisely.

Standardize

One of the key advantages of serverless is the dramatic velocity it can enable for engineering orgs. In principle, this velocity gain comes from outsourcing the "undifferentiated heavy lifting" of infrastructure to the cloud vendor, and while that's certainly true, a lot of the velocity in practice comes from individual teams self-serving their infrastructure needs and abandoning the central planning and controls that an expert operations team provides.

The good news is, it doesn't need to be either-or. You can get the engineering velocity of serverless while retaining consistency across your application. To do this, you'll need a centralized mechanism for building and delivering each release, managing multiple AWS accounts, multiple deployment targets, and all of the secrets management to do this securely. This means that your open source framework, which was an easy way to get started, just isn't going to cut it anymore.

Whether you choose to plug in software like Stackery or roll your own internal tooling, you're going to need to build a level of standardization across your serverless architecture. You'll need standardized instrumentation so that you know that every function released by every engineer on every team has the same level of visibility into errors, metrics, and event diagnostics. You're also going to want a centralized dashboard that can surface performance bottlenecks to the entire organization -- which is more important than ever before since many serverless functions will distribute failures to other areas of the application. Once these basics are covered, you'll probably want to review your IAM provisioning policies and make sure you have consistent tagging enforcement for inventory management and cost tracking.

Now, admittedly, this standardization need isn't the fun part of serverless development. That's why many enterprises are choosing to use a solution like Stackery to manage their serverless program. But even if standardization isn't exciting, it's critically important. If you want to build serverless into your company's standard tool chest, you're going to need for it to be successful. To that end, you'll want to know that there's a single, consistent way to release or roll back your serverless applications. You'll want to ensure that you always have meaningful log and diagnostic data and that everyone is sending it to the same place so that in a crisis you'll know exactly what to do. Standardization will make your serverless projects reliable and resilient.

Fail Gracefully

We plan for failure and standardize serverless implementations so that when failure does happen we can handle it gracefully. This is where "self-healing" gets exciting. Our standardized instrumentation will help us identify bottlenecks automatically and we can automate our response in many instances.

One of the core ideas in failing gracefully is that small failures are preferable to large ones. With this in mind, we can oftentimes control our failures to minimize the impact and we do this by rerouting and unblocking from failures. Say, for example, you have a Lambda reading off of a Kinesis stream and failing on a message. That failure is now holding up the entire Kinesis shard, so instead of having one failed transaction you're now failing to process a significant workload. If we, instead, allow that one blocking transaction to fail by removing it from the stream and (instead of processing it normally) simply log it out as a failed transaction and get it out of the way. Small failure, but better than a complete system failure in most cases.

Finally, while automating solutions is always the goal with self-healing serverless development, we can't ignore the human element. Whenever you're taking an automated action (like moving that failing Kinesis message), you should be notifying a human. Intelligent notification and visibility is great, but it's even better when that notification comes with all of the diagnostic details including the actual event that failed. This allows your team to quickly reproduce and debug the issue and turn around a fix as quickly as possible.

See it in action

In our final post we'll talk through five real-world use cases and show how these principles apply to common failure patterns in serverless architectures.

We'll solve: - Uncaught Exceptions - Upstream Bottlenecks - Timeouts - Stuck steam processing - and, Downstream Bottlenecks

If you want to start playing with self-healing serverless concepts right away, go start a free 7-day trial of Stackery. You'll get all of these best practices built-in.

Related posts