Self-Healing Serverless Applications - Part 1

This blog post is based on a presentation I gave at Glue Conference 2018. The original slides: Self-Healing Serverless Applications -- GlueCon 2018.

This is part one of a multi-part blog series. In this post we'll discuss some of the common failure scenarios you may face when building serverless applications. In future posts we'll highlight solutions based on real-world scenarios.

What to expect when you're not expecting

If you've been swept up in the serverless hype, you're not alone. Frankly, serverless applications have a lot of advantages, and my bet is that (despite the stupid name) "serverless" is the next major wave of cloud infrastructure and a technology you should be betting heavily on.

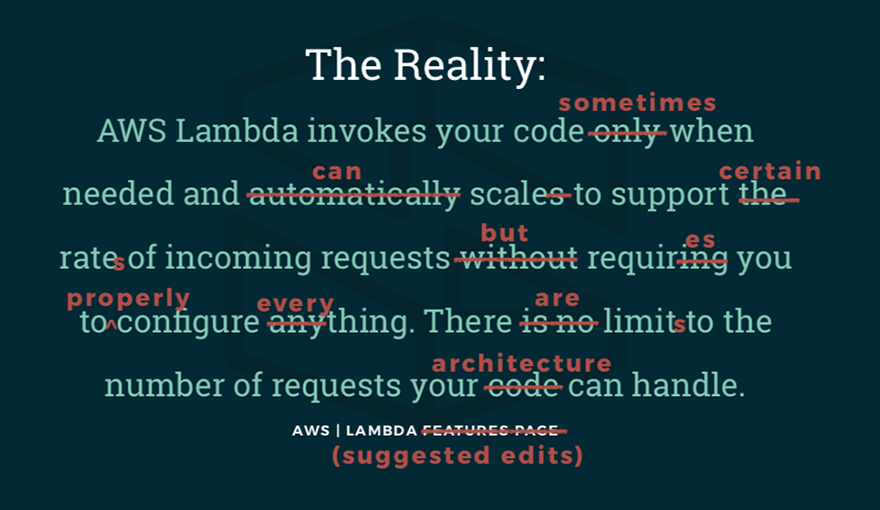

That said, like all new technologies, serverless doesn't always live up to the hype, and separating fact from fiction is important. At first blush, serverless seems to promise infinite and immediate scaling, high availability, and little-to-no configuration. The truth is that while serverless does offload a lot of the "undifferentiated heavy lifting" of infrastructure management, there are still challenges with managing application health and architecting for scale. So, let's take a look at some of the common failure modes for AWS Lambda-based applications.

Runtime failures

The first major category of failures is what I classify as "runtime" or application errors. It should come as no surprise that if you introduce bugs in your application, Lambda doesn't solve that problem. What may surprise you is that when you throw an uncaught exception, Lambda behaves differently depending on how you architect your application.

Let's briefly touch on three common runtime failures:

- Uncaught Exceptions: any unhandled exception (error) in your application.

- Timeouts: your code doesn't complete within the maximum execution time.

- Bad State: a malformed message or improperly provided state causes unexpected behavior.

Uncaught Exceptions

When a Lambda is invoked synchronously (in a request-response loop, for example), it returns an error to the caller and logs an error message and stack trace to CloudWatch, which is probably what you would expect. Things are different when a Lambda is invoked asynchronously, as might be the case with a background task. In that event, when an error is thrown, Lambda will attempt the invocation up to three times (the original attempt plus two retries). When reading off of a stream, like with Kinesis, by default it will keep retrying a failing batch until the data expires from the stream. In any case, when a Lambda fails in an asynchronous architecture, the caller is unaware of the error, although a log record with the error message and stack trace is still sent to CloudWatch.
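Because an asynchronous caller never sees the failure, one option is to log the triggering event yourself before the error propagates. The following is a minimal sketch, not a definitive implementation: the `log_failures` decorator and the `process` function are hypothetical names made up for illustration, and only the `(event, context)` handler signature comes from Lambda itself.

```python
import functools
import json
import logging

logger = logging.getLogger(__name__)


def log_failures(handler):
    """Hypothetical decorator: log the event and error, then re-raise."""

    @functools.wraps(handler)
    def wrapper(event, context):
        try:
            return handler(event, context)
        except Exception as exc:
            # One structured record per failure, including the input event,
            # so the failing message can be found and replayed later.
            logger.error(json.dumps({
                "error": type(exc).__name__,
                "message": str(exc),
                "event": event,
            }, default=str))
            raise  # re-raise so Lambda still treats the invocation as failed

    return wrapper


def process(event):
    # Hypothetical business logic that fails when a key is missing.
    return {"order": event["order_id"]}


@log_failures
def handler(event, context):
    return process(event)
```

Re-raising matters here: swallowing the exception would make the invocation look successful and skip Lambda's retry behavior.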

Timeouts

Sometimes Lambda will fail to complete within the configured maximum execution time, which by default is 3 seconds. In this case, it will behave like an uncaught exception, with the caveat that you won't get a stack trace out in the logs and the error message will be for the timeout and not for the potentially underlying application issue, if there is one. Using only the default behavior, it can be tricky to diagnose why Lambdas are timing out unexpectedly.
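One way to get a more useful error than the generic timeout message is to check the remaining time yourself and fail early with context. This is a sketch under assumptions: `DeadlineExceeded`, `check_deadline`, and the 500 ms safety margin are illustrative choices, while `get_remaining_time_in_millis()` is the method the Lambda context object exposes in the Python runtime.

```python
class DeadlineExceeded(Exception):
    """Raised by our own code shortly before Lambda would stop the invocation."""


def check_deadline(context, step, safety_margin_ms=500):
    # Ask the Lambda context how much execution time is left.
    remaining = context.get_remaining_time_in_millis()
    if remaining < safety_margin_ms:
        # Failing here produces a normal exception with a stack trace
        # that names the step we were about to start.
        raise DeadlineExceeded(f"{remaining} ms left before step '{step}'")


def handler(event, context):
    results = []
    for item in event.get("items", []):
        check_deadline(context, step=f"item {item}")
        results.append(item * 2)  # placeholder for the real work
    return results
```

The check only helps between steps; a single call that blocks past the deadline will still be cut off by Lambda.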

Bad State

Since serverless function invocations are stateless, state must be supplied on or after invocation. This means that you may be passing state data through input messages or connecting to a database to retrieve state when the function starts. In either scenario, it's possible to invoke a function without properly supplying the state it needs to execute. The trick here is that these "bad state" situations can either fail fairly noisily (as an uncaught exception) or fail silently without raising any alarms. When these errors occur silently, they can be nearly impossible to diagnose, and sometimes you won't even notice that you have a problem. The major risk is that by the time you notice, the events which triggered these functions have expired, and since the state was never stored correctly, you may have permanent data loss.
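A simple way to turn silent bad-state failures into noisy ones is to validate the input before doing any work. The sketch below assumes a made-up message schema (`user_id` and `action`); `BadStateError` and `validate_event` are hypothetical names, not part of any library.

```python
REQUIRED_FIELDS = ("user_id", "action")  # hypothetical message schema


class BadStateError(ValueError):
    """Raised when an invocation arrives without the state it needs."""


def validate_event(event):
    if not isinstance(event, dict):
        raise BadStateError(f"expected a dict, got {type(event).__name__}")
    # Treat absent, None, and empty-string values as missing.
    missing = [f for f in REQUIRED_FIELDS if event.get(f) in (None, "")]
    if missing:
        raise BadStateError("missing fields: " + ", ".join(missing))
    return event


def handler(event, context):
    state = validate_event(event)  # fail loudly before any side effects
    return {"status": "ok", "user_id": state["user_id"]}
```

Raising a dedicated exception type makes these failures easy to search for in the logs and to alarm on separately from other errors.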

Scaling failures

The other class of failures worth discussing is scaling failures. If we think of runtime errors as application-layer problems, we can think of scaling failures as infrastructure-layer problems.

The three common scaling failures are:

- Concurrency Limits: when Lambda can't scale high enough.

- Spawn Limits: when Lambda can't scale fast enough.

- Bottlenecking: when your architecture isn't scaling as much as Lambda.

Concurrency Limits

You can understand why Amazon doesn't exactly advertise their concurrency limits, but there are, in fact, some real advantages to having limits in place. First, concurrency limits are account limits that determine how many simultaneously running instances you can have of your Lambda functions. These limits can really save you in scenarios where you accidentally trigger an unexpected and massive workload. Sure, you may quickly run up a tidy little bill, but at least you'll hit a cap and have time to react before things get truly out of hand. Of course, the flipside to this is that your application won't really scale "infinitely," and if you're not careful you could hit your limits and throttle your real traffic. No bueno.

For synchronous architectures, throttled Lambdas will simply fail to be invoked, without any retry. If you're invoking your Lambdas asynchronously, like reading off of a stream, the Lambdas will fail to invoke initially, but will resume once your concurrency drops below the limit. You may experience some performance bottlenecks in this case, but eventually the workload should catch up. It's worth noting that you can usually get AWS to raise your limits, if needed, by contacting them.
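Since throttled synchronous invocations are not retried for you, the caller has to decide whether to retry. The following sketch shows one common approach, exponential backoff with jitter. Everything here is an assumption for illustration: `Throttled` is a stand-in for whatever throttling error your client library raises, and `invoke` is any zero-argument callable that performs the invocation.

```python
import random
import time


class Throttled(Exception):
    """Stand-in for the throttling error raised by your client library."""


def invoke_with_backoff(invoke, max_attempts=4, base_delay=0.1, sleep=time.sleep):
    """Call invoke(), retrying with jittered exponential backoff when throttled."""
    for attempt in range(max_attempts):
        try:
            return invoke()
        except Throttled:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the throttling error
            # Wait a random time up to base_delay * 2^attempt so that many
            # throttled callers do not all retry at the same moment.
            sleep(random.uniform(0, base_delay * 2 ** attempt))
```

The `sleep` parameter exists only so the delay can be swapped out in tests; in normal use the default `time.sleep` applies.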

Spawn Limits

While most developers with production Lambda workloads have probably heard of concurrency limits, in my experience very few know about spawn limits. Spawn limits are account limits on the rate at which new Lambdas can be invoked. This can be tricky to identify because if you glance at your throughput metrics you may not even be close to your concurrency limit, but you could still be throttling traffic.

By default, throttling from spawn limits behaves the same way as throttling from concurrency limits, but again, it may be especially challenging to identify and diagnose. Spawn limits are also very poorly documented and, to my knowledge, it's not possible to have these limits raised in any way.

Bottlenecking

The final scaling challenge involves managing your overall architecture. Even when Lambda scales perfectly (which, in fairness, is most of the time!), you must design your other service tiers to scale as well or you may experience upstream or downstream bottlenecks. In an upstream bottleneck, like when you hit throughput limits in API Gateway, your Lambdas may fail to invoke. In this case, you won't get any Lambda logs (they really didn't invoke), so you'll have to pay attention to other metrics to detect this. It's also possible to create downstream bottlenecks. One way this can happen is when your Lambdas scale up but deplete the connection pool for a downstream database. These kinds of problems can behave like an uncaught exception, lead to timeouts, or distribute failures to other functions and services.
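One common mitigation for the downstream database case is to open a connection once per function instance and reuse it across invocations, rather than opening a new one on every call. The sketch below is only an illustration: it uses the standard library's `sqlite3` with an in-memory database as a stand-in for a real database client, and `get_connection` is a hypothetical helper.

```python
import sqlite3

# Module-level state is kept between invocations while the same function
# instance stays warm, so the connection is created at most once per instance.
_connection = None


def get_connection():
    global _connection
    if _connection is None:
        # Stand-in for connecting to a real downstream database.
        _connection = sqlite3.connect(":memory:")
    return _connection


def handler(event, context):
    conn = get_connection()
    row = conn.execute("SELECT 1").fetchone()
    return row[0]
```

This keeps each instance to a single connection, but it does not cap the total on its own: the number of open connections still grows with the number of concurrent instances, so the database's connection limit has to be sized against your concurrency.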

Introducing Self-Healing Serverless Applications

The solution to all of this is to build resiliency with "Self-Healing" Serverless Applications. This is an approach to architecting applications for high resiliency and automated error resolution.

In our next post, we'll dig into the three design principles for self-healing systems:

- Plan for Failure

- Standardize

- Fail Gracefully

We'll also learn to apply these principles to real-world scenarios that you're likely to encounter as you embrace serverless architecture patterns. Be sure to watch for the next post!
