Injection Attacks: Protecting Your Serverless Functions

Security is Less of a Problem with Serverless but Still Critical

While trying to verify the claims made in a somewhat facile rundown of serverless security threats, I ran across Jeremy Daly’s excellent writeup of a single vulnerability type in serverless, itself inspired by a fantastic talk from Ory Segal on vulnerabilities in serverless apps. At first I wanted to describe how injection attacks can happen. But the fact is, the two resources I just shared already serve as amazing documentation; Ory found examples of these vulnerabilities in active GitHub repos! Instead, it makes more sense to recap their great work before diving into some of the ways that teams can protect themselves.

A Recap on Injection Vulnerability

It might seem like a serverless function just isn’t vulnerable to code injection. After all, it’s just a few lines of code. How much information could you steal from it? How much damage could you possibly do?

The reality is, despite Lambdas running on a highly managed OS layer, that layer still exists and can be manipulated. To put it another way, to be comprehensible and usable to developers of existing web apps, Lambdas need to have the normal abilities of a program running on an OS. Lambdas need to be able to send HTTP requests to arbitrary URLs, so a successful attack will be able to do the same. Lambdas need to be able to load their environment variables, so successful attacks can send all the variables on the stack to an arbitrary URL!

The attack is straightforward enough: a user-submitted file name contains a string that ends the intended command and appends a shell command of the attacker’s choosing. The careless developer processes uploaded files by passing their names to a shell command, so the injected command gets run as well.
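To make the mechanics concrete, here is a minimal Python sketch of the pattern described above, under some loud assumptions: `process-file` is a made-up tool name, the malicious file name is invented for illustration, and a small Python child process stands in for whatever real tool a function might call. It is not taken from Jeremy’s or Ory’s examples. The vulnerable version only builds the command string and never runs it; the safer version passes the file name as a single argument with no shell involved.

```python
import json
import subprocess
import sys

# Stand-in for a real file-processing tool (an assumption for this sketch):
# a child Python process that prints the arguments it received as JSON.
ECHO_ARGS = [sys.executable, "-c", "import json, sys; print(json.dumps(sys.argv[1:]))"]


def unsafe_command_string(file_name: str) -> str:
    """Vulnerable pattern, shown but never executed here.

    If this string were handed to a shell, anything after ';' in the
    file name would run as a second command.
    """
    return "process-file " + file_name  # 'process-file' is hypothetical


def run_without_shell(file_name: str) -> list:
    """Safer pattern: an argument list and no shell.

    The file name reaches the child process as one argument, so shell
    metacharacters inside it are never interpreted.
    """
    completed = subprocess.run(
        ECHO_ARGS + [file_name],
        capture_output=True,
        text=True,
        check=True,
    )
    return json.loads(completed.stdout)


if __name__ == "__main__":
    evil_name = "report.txt; echo INJECTED"  # hypothetical malicious upload name
    print(unsafe_command_string(evil_name))
    print(run_without_shell(evil_name))
```

The design point is that the second function never asks a shell to parse user input, so there is nothing for the `;` to terminate.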

What are the principles at work here?

It’s simple enough to say ‘sanitize your inputs’ but some factors involved here are a bit more complicated than that:

- Lambdas, no matter how small and simple, can leak useful information

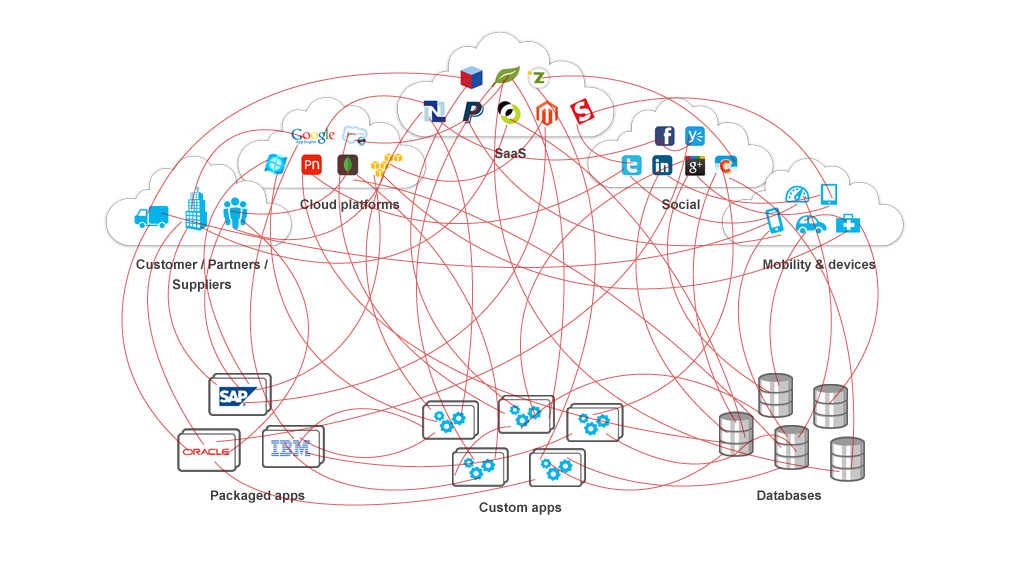

- There are many sources of events, and almost all of them could include user input

- With interdependence between serverless resources, user input can come from unexpected angles

- Alongside many sources of events, event data, and names, information can come in many formats

In case this should seem like a largely theoretical problem, note that Ory’s presentation used examples found in the wild on GitHub.
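Since event data is user input, one reasonable habit is to validate it before it goes anywhere near a command, query, or file path. The sketch below assumes an S3 upload event (where the object key sits under `Records[].s3.object.key` and arrives URL-encoded) and uses an allow-list pattern that is purely an assumption; the function name `extract_safe_keys` is hypothetical, and a real application would pick rules that match its own naming scheme.

```python
import re
from typing import List
from urllib.parse import unquote_plus

# Hypothetical allow-list: letters, digits, '_', '.', '/', '-' only,
# and the key may not start with '-', '.', or '/'.
SAFE_KEY = re.compile(r"[A-Za-z0-9_][A-Za-z0-9_./-]{0,254}")


def extract_safe_keys(event: dict) -> List[str]:
    """Return the decoded object keys from an S3 event, or raise ValueError."""
    keys = []
    for record in event.get("Records", []):
        raw_key = record.get("s3", {}).get("object", {}).get("key", "")
        key = unquote_plus(raw_key)  # S3 event keys are URL-encoded
        # Reject anything outside the allow-list, plus path traversal.
        if not SAFE_KEY.fullmatch(key) or ".." in key:
            raise ValueError(f"rejected object key: {key!r}")
        keys.append(key)
    return keys
```

Rejecting by default, rather than trying to strip out known-bad characters, means a format you did not anticipate fails closed instead of slipping through.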

Solution 1: Secure Your Functions

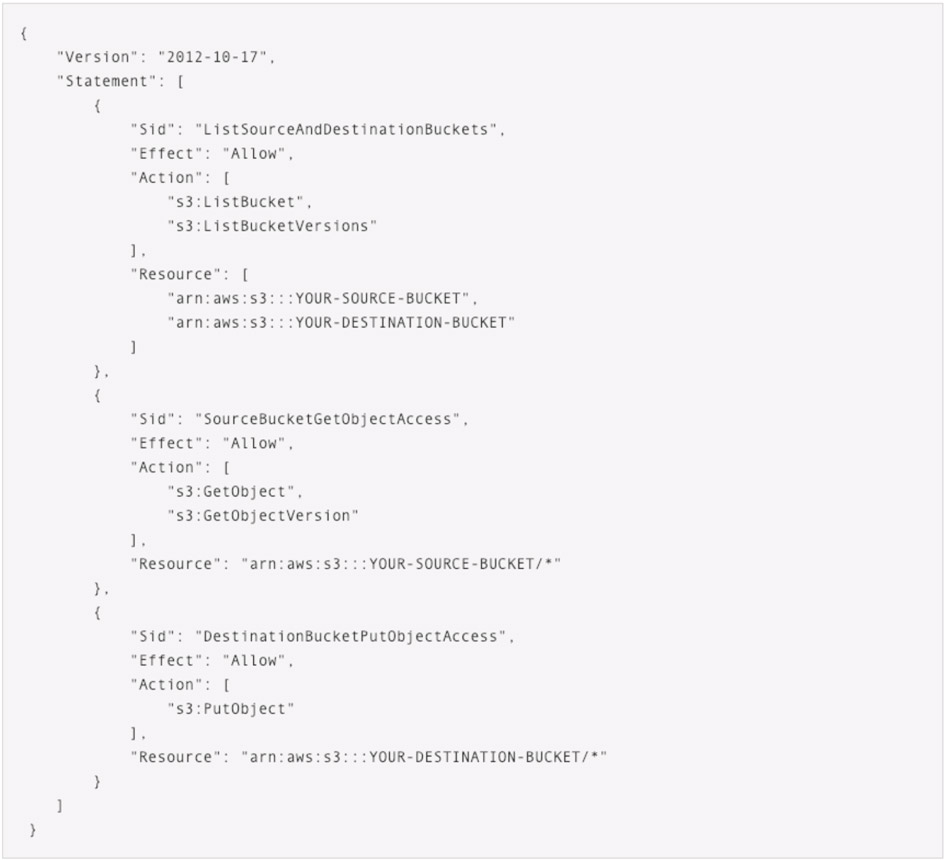

On Amazon Web Services (AWS), serverless functions are created with no special abilities within your other AWS resources. You need to give them permissions and connect them up to events from various sources. If your Lambdas need storage, it can be tempting to give them permissions to access your S3 buckets.

In this example from AWS, the permissions given by this policy only cover the two buckets we need for read/write. This is good!

If you’re using Lambdas in diverse roles, this means not using a single IAM policy for all of them. It’s possible to generalize somewhat and reuse policies, but this takes some monitoring of its own.
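As a rough illustration of what a narrowly scoped policy looks like, the Python sketch below builds an IAM policy document for one function. The bucket names and the function name `least_privilege_policy` are hypothetical, and the split into one read bucket and one write bucket is an assumption made for the example rather than a copy of the AWS policy mentioned above.

```python
import json


def least_privilege_policy(read_bucket: str, write_bucket: str) -> dict:
    """Build a policy allowing reads from one bucket and writes to another.

    No wildcard actions and no wildcard buckets: a compromised function
    can only touch objects in the two buckets named here.
    """
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{read_bucket}/*",
            },
            {
                "Effect": "Allow",
                "Action": ["s3:PutObject"],
                "Resource": f"arn:aws:s3:::{write_bucket}/*",
            },
        ],
    }


if __name__ == "__main__":
    # Hypothetical bucket names, for illustration only.
    policy = least_privilege_policy("example-uploads", "example-thumbnails")
    print(json.dumps(policy, indent=2))
```

Generating one such document per function role, instead of sharing a broad one, is what keeps a single injected command from reaching every bucket in the account.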

How Stackery Can Help

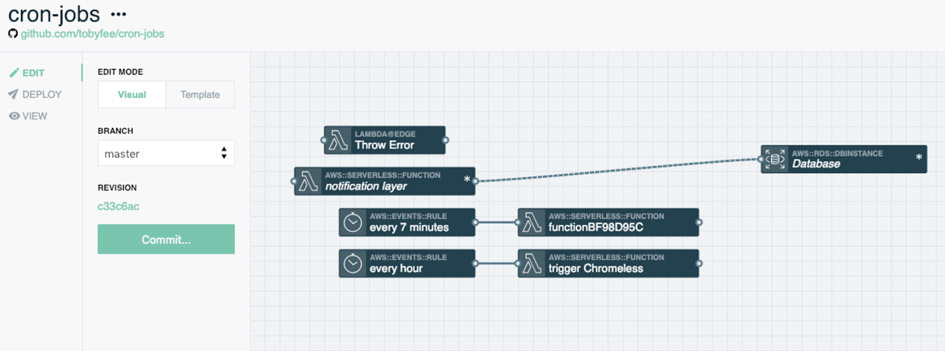

The creation and monitoring of multiple IAM roles for a single stack can get pretty arduous when done manually. I like writing JSON as much as the next person, but multiple permissions can also get tough to manage.

With Stackery, giving functions permissions to access a single bucket or database is as easy as drawing a line.

Even better, the Stackery dashboard makes it easy to see what permissions exist between your resources.

How Twistlock Can Help

Keeping a close eye on your permissions is a great general guideline, but we have to be realistic: dynamic teams need to make large, fast changes to their stack, and mistakes are going to happen. Without some kind of warning that our usual policies have been violated, there’s a good chance that vulnerabilities will go out to production.

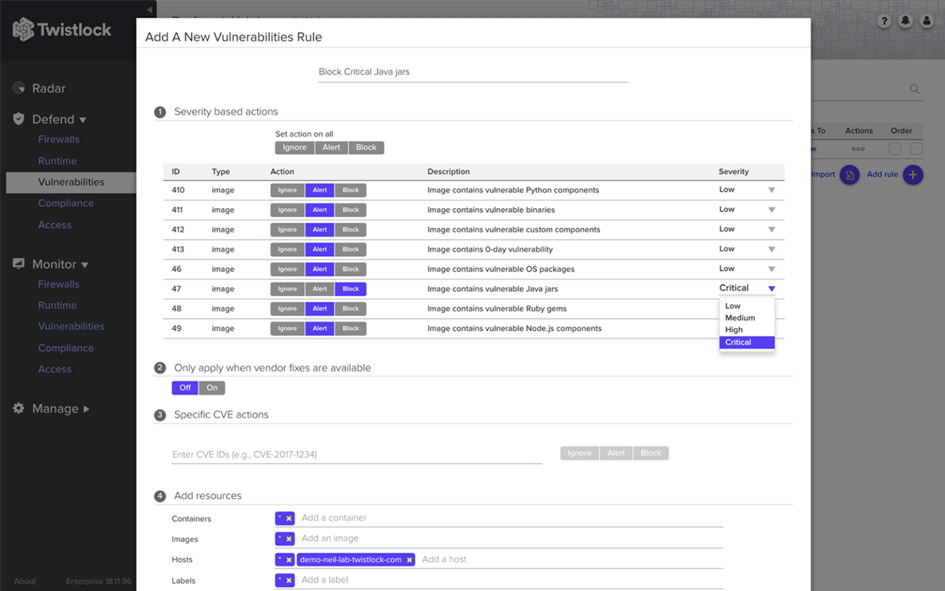

Twistlock lets you set overall policies, either in sections or system-wide, for where traffic should be allowed. It can generate warnings when policies are violated or even block traffic, for example between a Lambda that serves public information and a database with Personally Identifiable Information (PII).

Twistlock can also scan memory for suspect strings, meaning that, without any special engineering effort, it can detect when a key is being passed around when it shouldn’t be.

Further Reading

Ory Segal has a blog post on testing for SQL injection in Lambdas using open source tools. Even if you’re not going to roll your own security, it’s a great tour of the nature of the attacks that are possible.

Stackery and Twistlock work great together; in fact, we wrote up a solution brief about it. Serverless architecture is rapidly becoming the best way to roll out powerful, secure applications. Get the full guide here.
