Building a Reddit Bot with Stackery

I've always wanted to build a Reddit bot, but I didn't want to go through the hassle of setting up cloud-based hosting for it to run on. One of the most powerful aspects of serverless architectures is how simple it is to implement a task pipeline. In this case, I created a fully live Reddit bot in about an hour that scrapes the top posts from [/r/cooking](https://www.reddit.com/r/Cooking/) and emails them to me. It's easy to see how these atomic tasks can be chained together to create powerful applications. For example, with a bit more work, instead of an [AWS SNS topic](https://aws.amazon.com/documentation/sns/) we could feed the Reddit posts into an [AWS Kinesis Stream](https://docs.aws.amazon.com/streams/latest/dev/amazon-kinesis-streams.html), then attach consumer Lambda functions to the stream to perform context analytics. The same approach applies to a CI/CD pipeline, and in fact we use similar processes with our own serverless continuous integration (CI) and continuous delivery (CD) pipeline. [Read more about Stackery's CI/CD here.](https://www.stackery.io/blog/building-a-ci-pipeline-with-stackery/)

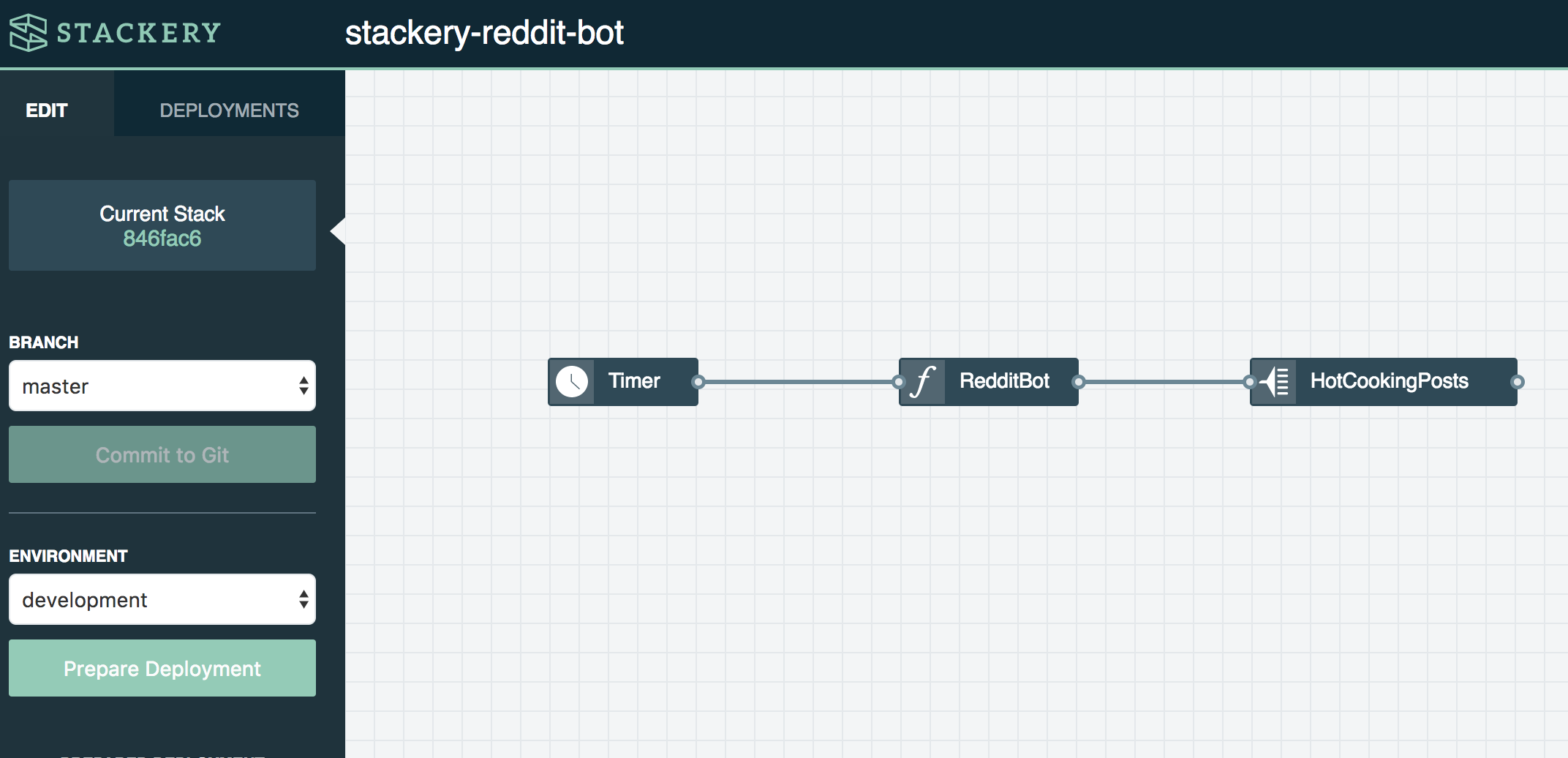

Overview of Components

- "Timer" node that pings the function, triggering the Reddit bot once a day

- "RedditBot" node, a Lambda function that, once triggered, authenticates with Reddit using the snoowrap library and scrapes the hot /r/cooking posts, sending the good ones along via SNS

- "HotCookingPosts" node, an SNS topic that forwards all messages to my email address

Implementation Details

Create a Reddit account for your bot. Then navigate to https://www.reddit.com/prefs/apps/ and select Create App, making sure you select "script" in the radio buttons underneath the name. Note down the client ID and client secret; these will go into the function configuration along with the Reddit username and password for your bot account.

Configure a stack using the Stackery dashboard with 3 nodes:

Timer -> Function -> SNS Topic

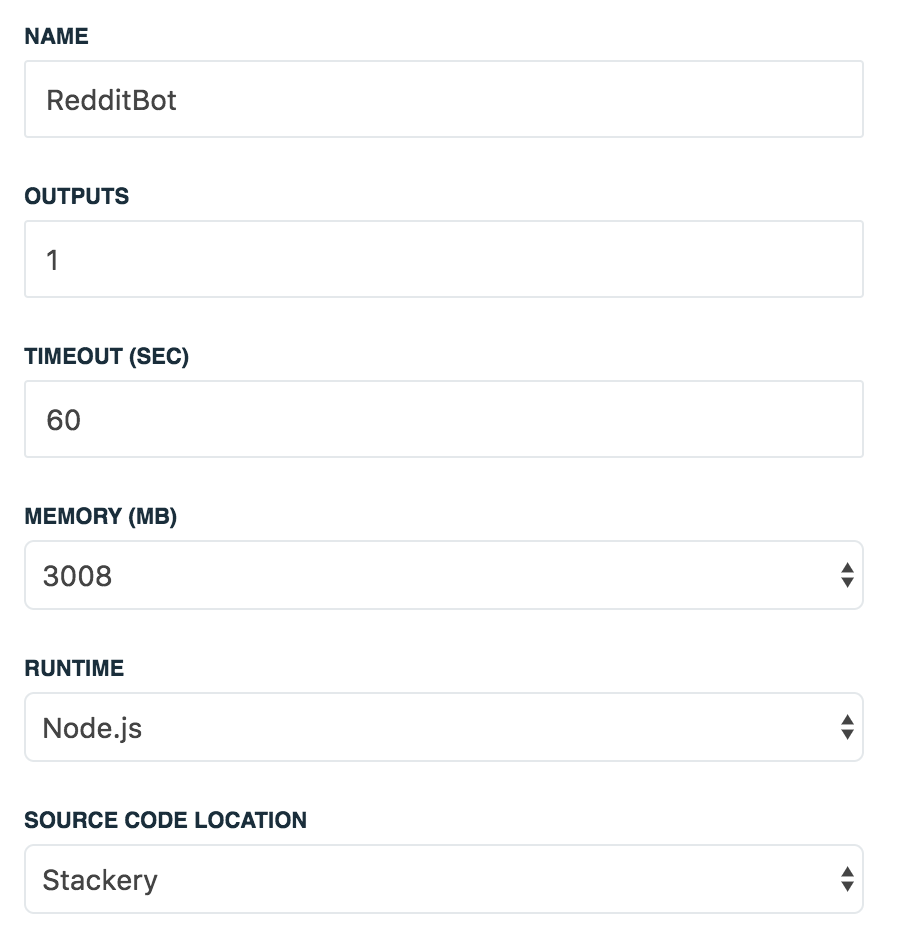

Attached to the function are some configuration values that Reddit's authentication mechanism requires. Stackery automatically provides a function with certain information based on what it's attached to (in this case, the SNS topic). Read more about the output port data here. We can leverage this when specifying the topic node ARN for forwarding on the selected posts, implemented in this file.

Function Settings:

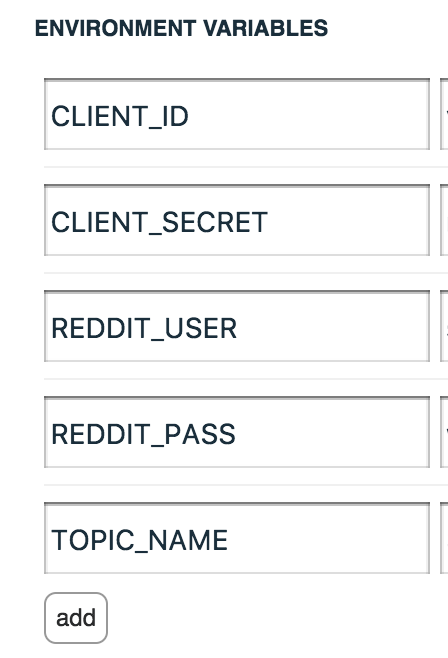

Configuration Environment Variables:

Fill in your saved client ID, client secret, Reddit bot username, and Reddit bot password, and store them under environment variable names in the function editor panel. For more information on how to create a deployment environment configuration, visit the Environment Configuration Docs. It's important not to add these sensitive values directly, as they will then be committed to GitHub and (depending on your repository settings) exposed to the public. When added via an environment configuration, these key-value pairs are automatically encrypted and stored in an S3 bucket on your AWS account.
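As a minimal sketch of how the function might pick those values up at runtime, the helper below reads the four credentials from an environment object and fails fast if any are missing. The variable names (`REDDIT_CLIENT_ID` and so on), the `loadRedditConfig` helper, and the user agent string are assumptions for illustration, not the names used in the actual bot; the returned keys follow the options that snoowrap's constructor accepts for script-type apps.

```javascript
// Hypothetical variable names; use whatever names you set in the function editor panel.
const REQUIRED_VARS = [
  'REDDIT_CLIENT_ID',
  'REDDIT_CLIENT_SECRET',
  'REDDIT_USERNAME',
  'REDDIT_PASSWORD'
];

// Read the Reddit credentials from an environment object (process.env in Lambda)
// and throw a descriptive error if any of them are missing.
function loadRedditConfig(env) {
  const missing = REQUIRED_VARS.filter((name) => !env[name]);
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(', ')}`);
  }
  return {
    // Illustrative user agent; Reddit asks for a descriptive, unique one.
    userAgent: `script:cooking-bot:v1.0 (by /u/${env.REDDIT_USERNAME})`,
    clientId: env.REDDIT_CLIENT_ID,
    clientSecret: env.REDDIT_CLIENT_SECRET,
    username: env.REDDIT_USERNAME,
    password: env.REDDIT_PASSWORD
  };
}
```

With the snoowrap package installed, the result could be passed straight to the constructor, for example `new snoowrap(loadRedditConfig(process.env))`.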

The "bot" function receives a timer event, which triggers it to scrape /r/cooking. You can set the timer to fire every minute to test functionality, but I'd recommend changing it to a more sane interval afterwards.

The function looks through the hot submissions and forwards any with more than 50 comments to the SNS topic. See the code for this here: https://github.com/stackery/stackery-reddit-bot
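The selection step can be sketched roughly as follows. This is an illustration under assumptions rather than the code from the repository: `selectPopularPosts` is a hypothetical helper, and it relies only on the `title`, `permalink`, and `num_comments` fields that Reddit submissions carry.

```javascript
// Threshold from the article: forward posts with more than 50 comments.
const MIN_COMMENTS = 50;

// Keep only submissions whose comment count exceeds the threshold and
// reduce each one to the fields worth emailing.
function selectPopularPosts(submissions, minComments = MIN_COMMENTS) {
  return submissions
    .filter((post) => post.num_comments > minComments)
    .map((post) => ({
      title: post.title,
      url: `https://www.reddit.com${post.permalink}`,
      comments: post.num_comments
    }));
}

// Wiring sketch (not run here; needs the snoowrap package and credentials,
// with `reddit` being a snoowrap instance):
//   const hot = await reddit.getSubreddit('cooking').getHot();
//   const selected = selectPopularPosts(Array.from(hot));
```

Keeping the filter as a plain function over an array makes it easy to try different thresholds locally without calling Reddit at all.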

You can also insert log statements in your own code to debug the Lambda function via CloudWatch Logs, which you can easily reach from the function's metrics tab in the Stackery deployments view.
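For example, a small helper like the one below emits one JSON line per stage of the bot. In Lambda's Node.js runtime, anything written with `console.log` ends up in the function's CloudWatch log stream. The helper names, stage names, and counts here are made up for illustration.

```javascript
// Build a one-line JSON log entry; JSON keeps entries easy to search later.
function formatLog(stage, details) {
  return JSON.stringify({ stage, ...details });
}

// Write the entry with console.log and return it so callers can reuse it.
function logStage(stage, details = {}) {
  const line = formatLog(stage, details);
  console.log(line);
  return line;
}

// Illustrative calls; the values are placeholders.
logStage('fetched', { subreddit: 'cooking', count: 25 });
logStage('selected', { count: 3 });
```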

Currently, the code sends a JSON object directly to email. To set up delivery, navigate to the SNS service in your AWS account, open the topic that Stackery has automatically provisioned, and click the Create Subscription button, setting the Protocol field to email and the value to your email address. For more on the capabilities of SNS, visit Amazon's SNS Docs.
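A rough sketch of what publishing that JSON might look like is shown here. `buildPublishParams` is a hypothetical helper and the subject line is invented; `TopicArn`, `Subject`, and `Message` are the standard parameters of the SNS publish call. How the function obtains the topic ARN depends on your stack, so it is simply passed in as an argument.

```javascript
// Build the parameter object for an SNS publish call.
// topicArn is supplied by the caller.
function buildPublishParams(topicArn, posts) {
  return {
    TopicArn: topicArn,
    // Email subscribers see this as the subject line.
    Subject: `Hot /r/cooking posts (${posts.length})`,
    // Pretty-printed JSON: this string becomes the email body.
    Message: JSON.stringify(posts, null, 2)
  };
}

// Wiring sketch (not run here; needs the aws-sdk package, v2 API):
//   const AWS = require('aws-sdk');
//   await new AWS.SNS().publish(buildPublishParams(topicArn, posts)).promise();
```

Because the message is plain JSON, swapping in a friendlier text format later only means changing how `Message` is built.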

As you can see, it's really straightforward to build a Reddit bot (and many other types of bots) using serverless resources and Stackery's cloud management capabilities. Bots are functionally lightweight by nature, and fit easily into serverless architectures. With the composability of AWS Lambda, they can be orchestrated and chained together to perform a variety of tasks, ranging from emailing scraped posts off Reddit, to managing CI/CD.